Agent Dexter

My little attempt at writing my own local AI agent

I was inspired by Gemini Live, which allows you to speak to an AI agent, and Claude Code, where user interactions take place via a terminal, and I thought to myself, “hmm… Shouldn’t be too hard to build something simple that can do both myself, right?” As you can probably guess by now, the answer is “wrong!” But you can also guess that I was foolhardy and naive enough not to realise this and to go ahead with building my own AI assistant, Dexter. Otherwise I would not be writing this post. So here goes…

Before we go further…

In this post, I will be going through what Dexter can and cannot do from a user’s perspective as well as some of the key learnings and challenges encountered while writing Dexter.

For more technical details, see the code repo here.

Regarding the use of AI, this post is written entirely “by hand”. While building Dexter, I consulted AI (Gemini 2.5 and Claude Sonnet 4), but I would say >95% of the code was “hand-written” (see here for more details). You might ask: why not use AI to write the code? Isn’t that what everyone is doing? Well, this is a hobby project for me to learn and sharpen my skills. Where’s the fun if everything is done by “someone” else?

Who is “Dexter”?

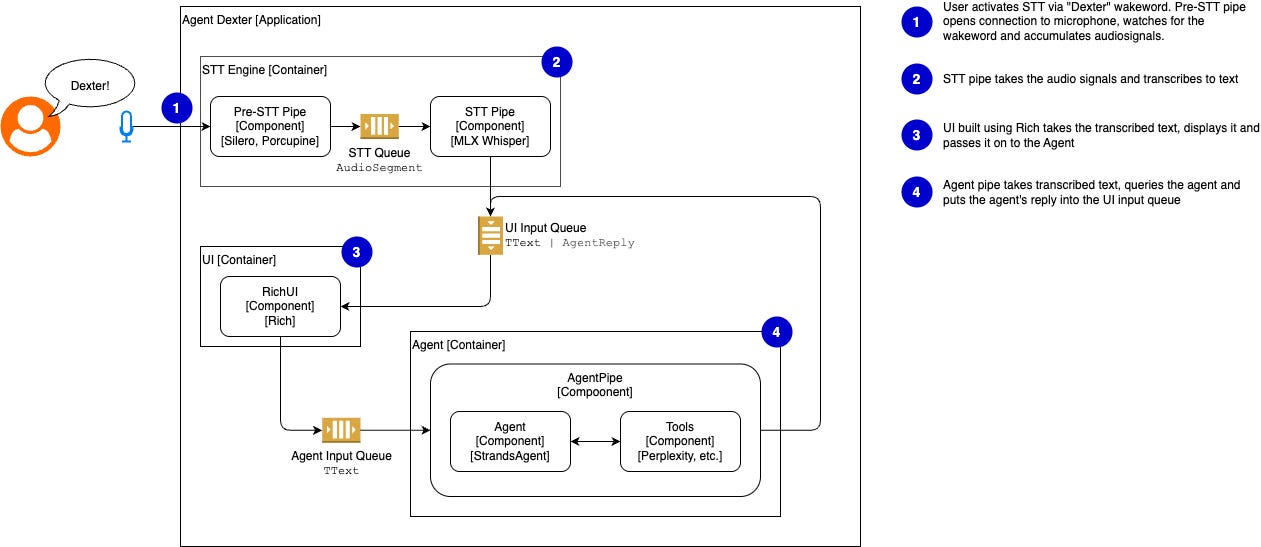

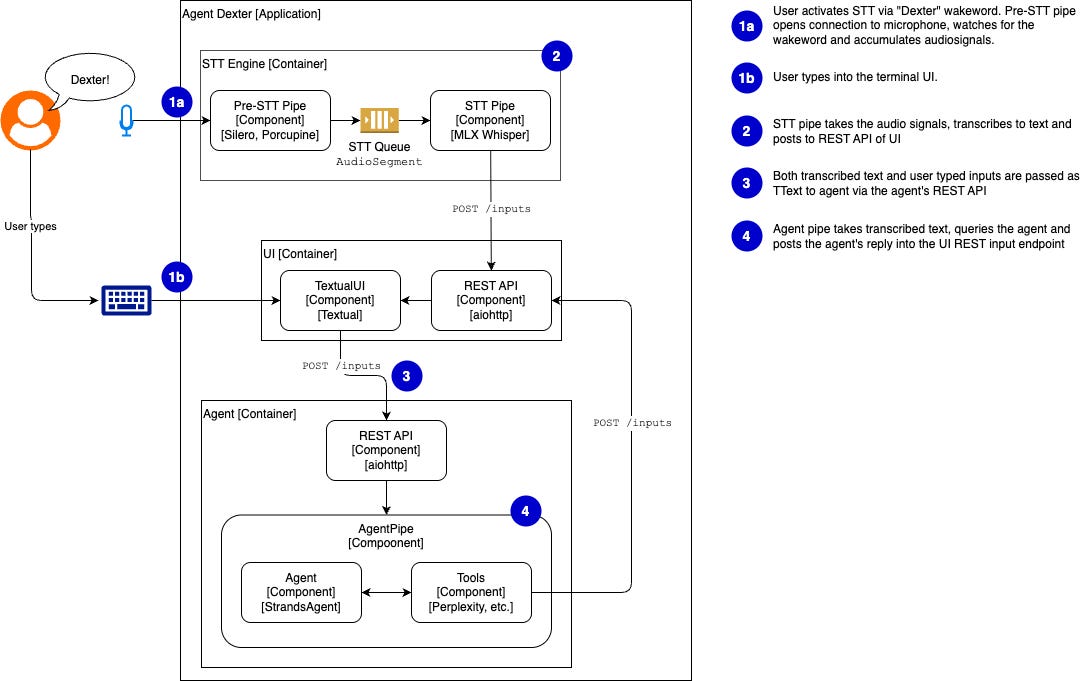

Agent Dexter is an AI agent that runs on my own computer. It has a terminal frontend so that I can type my questions, and it supports voice input so that I can wake it up with the wake word “Dexter” and ask my question verbally. My queries are then handled by an AI agent, which uses a Large Language Model (LLM) to decide whether to answer directly or to use the tools available to it.

While Dexter runs on my computer, the key intelligence comes from the LLM which resides on AWS’s servers that are queried using AWS Bedrock. So, if you intend to play around with it, know that not everything takes place on your computer.

Another thing to note is that, at present, Dexter only runs on a Mac. To make “him”[1] run on a Windows or Linux machine, you’d probably have to make some changes to the code or accept that you cannot interact with Dexter via voice commands.

What can Dexter do?

Dexter has two operation modes: voice and full. See instructions on invoking the two modes here.

Voice mode

In voice mode, you can wake “him” up by saying “Dexter” and then asking your question.

Full mode

In Full mode, you can either wake Dexter up as in voice mode or you can directly type your queries into the terminal.

The user experience in full mode is still a little laggy as I’ve not implemented streaming inputs and outputs.

Key Learnings & Challenges

AI agents enable flexibility in user interactions

One thing that was obvious to me is that AI agents enable user interactions that can be quite fluid and flexible. In the past, if I were to build functionality like searching for news or performing general tasks like adding 3 to 5, I’d have had to do so with buttons, drop-downs or tabs. Now, AI agents powered by language understanding from LLMs allow me to just speak and get things done.

Of course, whether this flexibility is desired in the first place depends on what you are building. I can imagine cases where you don’t want too many free-flowing conversations, and you just want to guide the user down a “narrow path”.

Tools make agents useful but effort is required in orchestration and prompting

Another nice thing with AI agents is that you can put tools in “their hands” and they are immediately “integrated”. For example, in this little project, I wrote a simple search_news tool that queries Perplexity. All I had to do was tell Dexter that this tool is now available, and it would be used the next time I started Dexter up.
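To make the idea of “telling the agent a tool is available” concrete, here is a minimal, framework-agnostic sketch. It is not Dexter’s code and not the Strands Agents API: the `TOOLS` registry, the `tool` decorator and `call_tool` are hypothetical names made up for illustration, and `search_news` is stubbed rather than calling Perplexity.

```python
from typing import Callable, Dict

# Hypothetical registry: maps a tool name to the function that implements it.
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(func: Callable[..., str]) -> Callable[..., str]:
    """Register a function so the agent loop can look it up by name."""
    TOOLS[func.__name__] = func
    return func


@tool
def search_news(query: str) -> str:
    """Stub of a news search tool (the real one would query Perplexity)."""
    return f"[stub] top news for: {query}"


def call_tool(name: str, **kwargs: str) -> str:
    """Dispatch a tool call that the LLM requested by name."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**kwargs)


# The agent loop would do this after the LLM asks for a tool by name.
print(call_tool("search_news", query="AI agents"))
```

Adding a new capability then means writing one more decorated function; the dispatch code stays untouched, which is roughly why integration feels so painless.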

In a separate project that I did concurrently, I also tried using MCP servers integrated with the Strands Agents framework that I used for Dexter. The integration process was rather painless too.[2]

That being said, again speaking from experience with other projects involving AI-assisted coding, while the effort may be reduced when it comes to modifying code to integrate new functionalities, effort is still needed to prompt the AI agent properly so that its behaviour and way of doing things stay consistent over time.

Conventions are starting to emerge in the AI agent world

I’m also noticing that conventions are starting to emerge in the AI agent world over time. For example, different frameworks keep a record of the conversation between the AI agent and the user as dictionaries with a more or less standardised format (such as {"role": "user", "content": "…"}).

I wouldn’t call this phenomenon a “standard” yet, though, as I feel that things will change very quickly in the AI world.
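As a small illustration of that convention, a conversation history can be kept as a plain list of such dictionaries. This is only a sketch: the `add_message` helper and the set of allowed roles are my own assumptions, and the exact shape of `content` differs between frameworks.

```python
from typing import Dict, List

Message = Dict[str, str]

# Assumed set of roles; individual frameworks may use a different set.
ALLOWED_ROLES = {"system", "user", "assistant"}


def add_message(history: List[Message], role: str, content: str) -> List[Message]:
    """Return a new history with one more role/content message appended."""
    if role not in ALLOWED_ROLES:
        raise ValueError(f"unsupported role: {role}")
    return history + [{"role": role, "content": content}]


history: List[Message] = []
history = add_message(history, "user", "What is 3 + 5?")
history = add_message(history, "assistant", "3 + 5 is 8.")
print(history)
```

Because the whole record is just a list of dictionaries, it is easy to log, trim or hand over to a different framework that expects a similar shape.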

Building Dexter’s speech-to-text capabilities was fun

I started out wanting to use RealtimeSTT for the STT engine, as it included wakeword detection and voice activity detection (VAD) out of the box. However, it does not support Mac GPUs and running it on CPU was just too slow. That’s why I had to go with MLX Whisper and implement wakeword detection and VAD myself.
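For readers who have not met these terms, here is a very rough sketch of what the two pieces could look like. It is not how Dexter does it: I am assuming a simple energy-threshold VAD on audio frames and a wake word check on already-transcribed text, and the function names and the `0.02` threshold are made up for illustration.

```python
import math
import re
from typing import Sequence


def rms(frame: Sequence[float]) -> float:
    """Root-mean-square energy of one audio frame (samples in [-1.0, 1.0])."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def is_speech(frame: Sequence[float], threshold: float = 0.02) -> bool:
    """Naive VAD: treat a frame as speech when its energy reaches the threshold."""
    return rms(frame) >= threshold


def heard_wake_word(transcript: str, wake_word: str = "dexter") -> bool:
    """Check whether the wake word appears as a whole word in a transcript."""
    words = re.findall(r"[a-z']+", transcript.lower())
    return wake_word.lower() in words


silence = [0.0] * 160
loud = [0.5, -0.5] * 80
print(is_speech(silence), is_speech(loud))
print(heard_wake_word("Hey Dexter, what's the news?"))
```

In a real pipeline the frames judged to be speech would be buffered and passed to the STT model, and only transcripts containing the wake word would be forwarded to the agent.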

The experience of researching different frameworks and working them into an application was rewarding.

Overcoming terminal race conditions

If you look at the way voice mode is run, you would see that everything is run in a single Python program with queues handling data sharing.
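The single-program-with-queues pattern can be sketched roughly as below. This is an assumption-laden toy rather than Dexter’s code: `listener`, `responder` and `run_pipeline` are hypothetical names, and plain strings stand in for audio and agent replies.

```python
import queue
import threading
from typing import List

SENTINEL = None  # Marks the end of the stream so the consumer can stop.


def listener(out_q: queue.Queue, utterances: List[str]) -> None:
    """Stand-in for the STT side: push each recognised utterance onto the queue."""
    for text in utterances:
        out_q.put(text)
    out_q.put(SENTINEL)


def responder(in_q: queue.Queue, replies: List[str]) -> None:
    """Stand-in for the agent side: consume utterances until the sentinel arrives."""
    while True:
        text = in_q.get()
        if text is SENTINEL:
            break
        replies.append(f"agent reply to: {text}")


def run_pipeline(utterances: List[str]) -> List[str]:
    """Run both sides in threads inside one program, sharing data via a queue."""
    q: queue.Queue = queue.Queue()
    replies: List[str] = []
    workers = [
        threading.Thread(target=listener, args=(q, utterances)),
        threading.Thread(target=responder, args=(q, replies)),
    ]
    for w in workers:
        w.start()
    for w in workers:
        w.join()
    return replies


print(run_pipeline(["what is the news today", "add 3 to 5"]))
```

The queue is the only thing the two sides share, so neither needs to know how the other works.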

However, when I tried to do the same for full mode, where the user can also type into the terminal (like Claude Code), I ran into a whole lot of trouble as a result of race conditions, with the various running processes competing for the terminal’s “attention”.

In fact, this was the key challenge that took up half the development time, by my estimation. I had to give up in the end and go for the approach where I have 3 separate processes communicating over HTTP.
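To give a feel for components talking over HTTP, here is a minimal sketch using only the Python standard library. It is not Dexter’s architecture in detail: the `/query` path, the JSON fields and the function names are assumptions, and to keep the example self-contained the “agent” server runs in a background thread where a real setup would run it as its own process.

```python
import json
import threading
from http.client import HTTPConnection
from http.server import BaseHTTPRequestHandler, HTTPServer


class AgentHandler(BaseHTTPRequestHandler):
    """Stand-in for the agent component: answers POSTed queries with JSON."""

    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length))
        body = json.dumps({"reply": f"agent reply to: {payload['query']}"}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args) -> None:
        """Silence request logging so nothing else writes to the terminal."""


def start_agent_server() -> HTTPServer:
    """Start the server on a free local port (a thread here, a process in practice)."""
    server = HTTPServer(("127.0.0.1", 0), AgentHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def send_query(port: int, query: str) -> str:
    """What a terminal or voice component would do: POST a query, read the reply."""
    conn = HTTPConnection("127.0.0.1", port, timeout=5)
    conn.request(
        "POST",
        "/query",
        body=json.dumps({"query": query}).encode(),
        headers={"Content-Type": "application/json"},
    )
    reply = json.loads(conn.getresponse().read())["reply"]
    conn.close()
    return reply


server = start_agent_server()
print(send_query(server.server_address[1], "add 3 to 5"))
server.shutdown()
```

The appeal of this split is that only one component ever owns the terminal; the others just send and receive messages, so they no longer compete for it.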

This is the key learning for me on the coding front this time round.

Final Reflections

Dexter was a fun little hobbyist project to dip my toes into the world of writing AI agent-enabled applications. While I have to admit it took more time than I thought it would, and struggling with the terminal race conditions was a huge pain, it was fun figuring out how to integrate STT with Dexter. And maybe Dexter will become a platform for me to explore other agentic AI functions, such as integration with a knowledge graph or a persistent memory layer for AI agents.

[1] You will notice that I refer to Dexter with the pronoun “him” in quotes. This is because agentic AI has brought about the phenomenon where people deal with AI as if it were a person. But I’m still cautious about over-anthropomorphising AI.

[2] I didn’t use MCP servers for Dexter, but I don’t think they would be hard to implement under the Strands Agents framework. Do note that there are potential security concerns with MCP servers.